Pandas is a highly utilized data science library for the Python programming language. One of the many reasons Pandas has become the de facto data processing library is the ease with which it allows developers to find and replace missing values in datasets.

In this short article, you’ll learn how to inspect a dataset using Pandas and identify any potential missing data, how to replace those data, and the functions Pandas afford for the many options of doing so.

TL;DR – Pandas provides several methods for replacing missing data. Note the use of the inplace argument. That transforms the DataFrame object without creating another copy in memory. Here’s a basic example of each:

import pandas as pd # Parse data with missing values # as Pandas DataFrame object df = pd.DataFrame(dirty_data) # Replace with 0 values. Accepts regex. df.replace(np.NAN, 0, inplace=True) # Replace with zero values df.fillna(value=0, inplace=True) # Replace with interpolated value. df.interpolate(inplace=True)

Introduction

Pandas is one of the defacto data science libraries for Python and for good reason. This library makes replacing missing values in one’s data a breeze. The DataFrame class is host to several methods designed specifically for this use case. In this article, we’ll cover three of the most common methods used to replace missing data in Pandas. We’ll take a stepwise approach covering the following stages:

- Obtain data with missing values

- Check data for missing values

- Replace missing values

These are three basic concepts but I find it important to have an explicit step-by-step approach to dealing with what is often a very messy situation. Fortunately, Pandas doesn’t require any complicated syntax to move mountains of data.

Step 1: Generate/Obtain Data with Missing Values

For this tutorial, we’ll be using randomly generated TimeSeries data with a date and random integer value. Several of the integer values have been purposefully corrupted resulting in NaN values after loading into pandas. These values are those that Pandas is going to fix for us in the next step. The data used in this article can be generated via the code below or downloaded from here (sequence of missing values may vary.)

import pandas as pd

# Generate series from start of 2018 to end of 2020

series = pd.date_range(start='2018-01-01', end='2020-12-31', frequency='d')

# Convert to DataFrame object

series = pd.DataFrame(series, columns=['date'])

# Set index

series.set_index('date', inplace=True)

# Create series of random integers with some None values

ints = [random.choice(list(range(10)) + [None]) for x in range(len(series))]

# Add values to DataFrame series object as new column

series['nums'] = nums

# Inspect result

>>> series

nums

date

2018-01-01 NaN

2018-01-02 3.0

2018-01-03 9.0

2018-01-04 5.0

2018-01-05 2.0

... ...

2020-12-27 2.0

2020-12-28 8.0

2020-12-29 7.0

2020-12-30 3.0

2020-12-31 5.0

[1096 rows x 1 columns]

Here we see that the first value for our time series was given a randomly selected NaN value (representing a None object in Python). This value, along with identical NaN entries, will represent the missing data we’ll be using Pandas to replace. If you wish to save such data for convenience the DataFrame.to_csv() method is recommended.

Note: The code above is available as a function definition on Github here.

Step 2: Check for Missing Data

Checking for missing data is an essential step in any analytical pipeline. Pandas offers several convenient methods to do this, each with varying specificity and utility. The following three methods are useful:

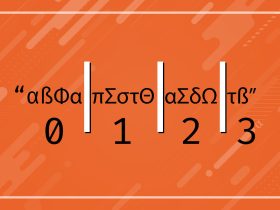

DataFrame.isnull()– replaces all data with boolean values such that False indicates missing data. Best suited for granular assessment of specific data ranges;DataFrame.isnull().values.any()– returns a boolean indicating if the entire dataset includes any missing data. Great for quick assessment;DataFrame.isnull().sum()– returns a total count of missing values for each column and datatype. Great balance of holism and specificity.

Below is an example usage of each of these methods and their resulting descriptions of our time series data:

# Replace missing values with Booleans

>>> series.isnull()

nums

date

2018-01-01 False

2018-01-02 False

2018-01-03 True

2018-01-04 False

2018-01-05 False

... ...

2020-12-27 True

2020-12-28 False

2020-12-29 False

2020-12-30 False

2020-12-31 False

[1096 rows x 1 columns]

# Check entire set for any missing values

>>> series.isnull().values.any()

True

# Check for total count of missing datum

>>> series.isnull().sum()

nums 88

dtype: int64

There are a handful of other methods available for the DataFrame.isnull() method that are described in the official Pandas documentation. For more information on the values().any() method see the official NumPy documentation for the np.array object. Now that we know our data contains missing values we can formulate an approach to begin replacing the data as we best see fit.

Quick Aside: isnull() vs. isna()

Pandas offers two methods for the DataFrame object that both detect null or NaN values. The isnull() and isna() methods both do exactly the same thing. Don’t believe me? Check out the source code here. These methods can be used interchangeably with DataFrame instances. One possible reasoning behind this design is the developers of Pandas offering a hat-tip to Ruby (a popular data science language) treats NaN (NA) and null values differently—just my best guess!

Step 3: Replace the Missing Data

Pandas offers several utilities that are useful in the replacement of missing data. Here we’ll cover three common methods:

DataFrame.fillna()– FillsNaNvalues with a specified replacement value. Several optional keyword arguments of whichinplace: bool‘ adds the replacement value without copying theDataFrameobject.DataFrame.replace()– Replaces a specified value with an alternative. Not strictly limited toNaNreplacements.DataFrame.interpolate()– FillsNaNvalues with interpolated values generated by a specified method. e.g. linear, time, index.

Let’s see how each of these methods of replacing missing data in Pandas works with our Time Series data. First up, let’s try out the fillna() method:

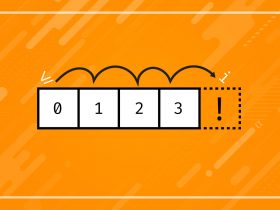

Option 1: DataFrame.fillna()

Still using our data before, we can fill the NaN values using the fillna() method in one of several ways. First, let’s use only the required argument value to replace the NaN values.

# Count NaN values >>> series.isnull().sum() # Result nums 89 dtype: int64 # Replace NaN with value of 1, recount >>> series.fillna(value=1) >>> series.isnull().sum() # Result nums 89 dtype: int64

It looks like nothing has changed in our data and our NaN values persisted. This is because the fillna() method returns a copy of our DataFrame object. Without assigning that object to a variable we have no way to reference it again.

To avoid this pitfall, simply assign the return as such: series = series.fillna(value=1). Alternatively, you can use a different variable name rather than overwriting the initial series object. Another approach is to simply make use of the in_place argument offered by fillna() as such:

# Check our data for NaN again >>> series.isnull().sum() # Result nums 113 dtype: int64 # Use fillna() w/in_place set to True >>> series.fillna(value=1, in_place=True) # Result nums 0 dtype: int64

This approach is more elegant in my opinion but does eliminate access to the original DataFrame object—and the original data—keep that in mind. Time Series data is linear data and often reflects a strong linear correlation.

Replacing NaN values with a static value may not be well-suited for later analysis. In such cases, replacing values with a calculated value may be better suited. The fillna() method offers several built-in options for this. Here we’ll be using the bfill option to replace NaN values with the last value in the Time Series:

# Print a representation of our data

>>> series

# Result

nums

date

2018-01-01 7.0

2018-01-02 4.0

2018-01-03 1.0

2018-01-04 5.0

2018-01-05 7.0

... ...

2020-12-27 0.0

2020-12-28 NaN

2020-12-29 4.0

2020-12-30 3.0

2020-12-31 2.0

[1096 rows x 1 columns]

# Fill NaN values using 'ffill' method

>>> series.fillna(method='ffill', inplace=True)

# Result

nums

date

2018-01-01 7.0

2018-01-02 4.0

2018-01-03 1.0

2018-01-04 5.0

2018-01-05 7.0

... ...

2020-12-27 0.0

2020-12-28 0.0

2020-12-29 4.0

2020-12-30 3.0

2020-12-31 2.0

[1096 rows x 1 columns]

Note that we have the same number of columns and all our values, other than the NaN were unaffected. The NaN was replaced by the 0.0 value before it (forward-filling). Check out the official Pandas documentation for a full list of method options as well as other keyword arguments that can be used.

The fillna() method can also use dynamic values as such: series.fillna(series.mean(), inplace=True). This will replace all NaN values for each column in a DataFrame with the average value for that column. That can be incredibly useful but, again, might influence correlation values by an unintended magnitude.

Option 2: DataFrame.replace()

The replace() method is similar to the fillna() method but offers a different set of options. Differences between fillna() and replace() can be characterized by noting the replace() method accommodates the use of regular expressions for searching and replacing values. Check out the official Pandas Documentation for a fuller explanation.

This can be useful in cases where data isn’t missing but may be of improper format. For example, a list of emails of which some contain [at] instead of the @ symbol. We’ll not be covering this method in depth given its similarity to the fillna() method but a basic example can be seen below:

# View our initial series data

>>> series

# Result

nums

date

2018-01-01 NaN

2018-01-02 3.0

2018-01-03 2.0

2018-01-04 NaN

2018-01-05 2.0

... ...

2020-12-27 1.0

2020-12-28 NaN

2020-12-29 4.0

2020-12-30 1.0

2020-12-31 3.0

# Replace all NaN values with 1's

>>> series.replace(to_replace=np.NAN, value=1, inplace=True)

# Result

[1096 rows x 1 columns]

nums

date

2018-01-01 1.0

2018-01-02 3.0

2018-01-03 2.0

2018-01-04 1.0

2018-01-05 2.0

... ...

2020-12-27 1.0

2020-12-28 1.0

2020-12-29 4.0

2020-12-30 1.0

2020-12-31 3.0

[1096 rows x 1 columns]

Option 3: DataFrame.interpolate()

The Pandas DataFrame object offers a powerful interpolation method to fix missing data with values more congruent to valid data. Quickly; interpolation is a method of estimation based on values within a [usually] nearish range of numbers. Check out this article by ArcGIS for a nice visual description of interpolation. Let’s see how the DataFrame.interpolate() function can help fix our missing data:

# View our initial data

series.head(15)

# Result

nums

date

...

2018-01-07 2.0

2018-01-08 1.0

2018-01-09 0.0

2018-01-10 NaN

2018-01-11 NaN

2018-01-12 1.0

2018-01-13 NaN

2018-01-14 1.0

2018-01-15 0.0

# Replace NaN values via interpolate

>>> series.interpolate(inplace=True)

# Result

nums

date

...

2018-01-07 2.000000

2018-01-08 1.000000

2018-01-09 0.000000

2018-01-10 0.333333 # interpolated

2018-01-11 0.666667 # interpolated

2018-01-12 1.000000

2018-01-13 1.000000 # interpolated

2018-01-14 1.000000

2018-01-15 0.000000

Here I’ve done things a little bit differently. I’ve used the DataFrame.head() method to show a more congruent series of values for illustration. You can see that Pandas uses the values before and after the NaN values to create a sort of average.

This is a linear estimate and has applications in many areas of statistics, machine learning, and mathematics. Pandas offers several other interpolation methods that can be used by passing an appropriate keyword. Check out the official documentation for more info.

Final Thoughts

We’ve seen the basic approaches to finding and replacing missing values in Pandas. These methods and approaches will handle the basic cases of data wrangling one might encounter. While more advanced methods and pipelines are needed for most data-processing workflows these methods and approaches can still be integrated easily. Replacing a NaN is—usually—still a matter of replacing an NaN.